The JIVE team is working on ethical aspects of human-AI collaboration, such as bias, privacy and transparency.

We are focusing on developing text rewriting techniques that normalise, de-bias and de-identify text, in order to build responsible AI models that protect individuals' sensitive information. This includes Reinforcement Learning (RL) methods applied to Large Language Models (LLMs). Such models could serve as digital twins to monitor and improve the mental health of vulnerable individuals. We also work on the transparency of our models, enabling them to convey their decisions in collaborative protocols by reporting uncertainty and bias estimates; this includes Bayesian Deep Learning techniques. In addition, we integrate LLMs into agents for language acquisition problems and for educational and healthcare contexts. Beyond mental health, we also work with legal data.


Publications

- Ignashina, M., & Ive, J. (2024). Safe Training with Sensitive In-domain Data: Leveraging Data Fragmentation To Mitigate Linkage Attacks. arXiv.

- Rahaman, M. A., & Ive, J. (2023). Source Code is a Graph, Not a Sequence: A Cross-Lingual Perspective on Code Clone Detection. To appear in NAACL Student Research Workshop (SRW) 2024.

- Alhamed, F., Ive, J., & Specia, L. (2024). Classifying Social Media Users Before and After Depression Diagnosis via their Language Usage: A Dataset and Study. To appear in LREC-COLING 2024.

- Alhamed, F., Ive, J., & Specia, L. (2024). Using Large Language Models (LLMs) to Extract Evidence from Pre-Annotated Social Media Data. 9th Workshop on Computational Linguistics and Clinical Psychology (CLPsych 2024), pp. 232-237.

- Popat, R., & Ive, J. (n.d.). Embracing the uncertainty in human-machine collaboration to support clinical decision making for Mental Health Conditions. Frontiers in Digital Health, 5, 1188338.

- Wu, H.-Y., Zhang, J., Ive, J., Li, T., Gupta, V., Chen, B., & Guo, Y. (2022). Medical Scientific Table-to-Text Generation with Human-in-the-Loop under the Data Sparsity Constraint. NeurIPS 2022 Workshop SyntheticData4ML.

- Ive, J. (2022). Leveraging the potential of synthetic text for AI in mental healthcare. Frontiers in Digital Health, 4, 1010202.

- Anuchitanukul, A., & Ive, J. (2022). SURF: Semantic-level Unsupervised Reward Function for Machine Translation. NAACL (25.2% acceptance rate).

- Liu, H., Seedat, N., & Ive, J. (2022). Modeling Disagreement in Automatic Data Labelling for Semi-Supervised Learning in Clinical Natural Language Processing. Under review.

- Ive, J., Li, A. M., Miao, Y., Caglayan, O., Madhyastha, P., & Specia, L. (2021). Exploiting Multimodal Reinforcement Learning for Simultaneous Machine Translation. EACL (24.7% acceptance rate).

- Ive, J., Viani, N., Kam, J., Yin, L., Verma, S., Puntis, S., Cardinal, R. N., Roberts, A., Stewart, R., & Velupillai, S. (2020). Generation and evaluation of artificial mental health records for Natural Language Processing. npj Digital Medicine (first quartile, impact factor 15.2).

- Ive, J., Madhyastha, P., & Specia, L. (2019). Distilling Translations with Visual Awareness. ACL 2019 (25.7% acceptance rate).

News

What will we be saying about AI in ten years’ time?

Seminar given by Julia Ive, Queen Mary University, an invited speaker at ETIS in June 2024.


Addressing Socio-technical Limitations of LLMs for Medical and Social Computing

At CogX Los Angeles, Dr Julia Ive represents the Responsible AI UK Keystone project on Addressing Socio-technical Limitations of LLMs for Medical and Social Computing (AdSoLve), led by Prof Maria Liakata.


What will we be saying about AI in ten years’ time?

Dr Julia Ive and Professor Gianluca Sergi will be discussing what crisis points may have emerged recently and what AI governance structures might look like.


The promise of AI: working across disciplines for the public good

Dr Julia Ive will be presenting a seminar at Queen Mary University of London with David Leslie and Dr Isadora Cruxen.


Mental Health Monitoring workshop

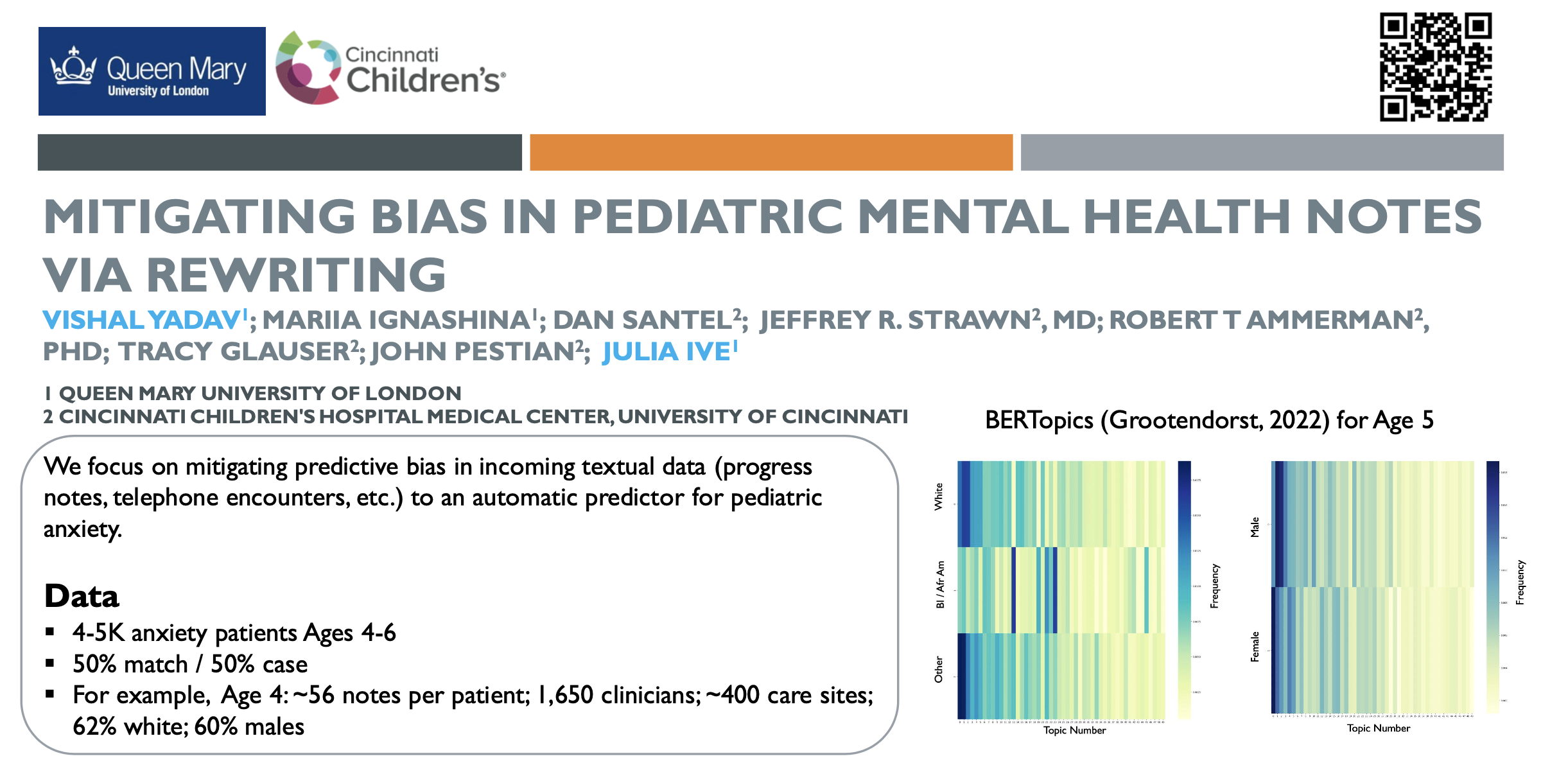

Julia and Vishal presented a poster at the AI for Mental Health Monitoring workshop, a Fringe Event of the Alan Turing Institute.


Artificial Intelligence in Healthcare: Shaping the Future of Science (AI4H) Conference, University of Padua, Padova, Italy

Julia and Mariia present a poster on Mitigating Bias in Pediatric Mental Health Notes via Rewriting.


Regulating AI in Digital Mental Health Forum

Dr Julia Ive will be speaking at the Regulating AI in Digital Mental Health Forum, AI Turing Fringe Event.

The Team

Dr Julia Ive is the lab lead and an expert in guiding foundation models for text generation with Reinforcement Learning (RL). Her track record includes a major scientific breakthrough in generating synthetic mental health text, which she pioneered in 2018 through a pilot project with colleagues from King's College London, Cambridge and Oxford. The methodology stemming from the project was published in the high-ranking journal npj Digital Medicine.

At Queen Mary University of London (QMUL), she has been the module organiser of the MSc-level Artificial Intelligence course. She has taught Neural Networks and Natural Language Processing both at Imperial College London, with Prof Lucia Specia, and at the Department of Computing at QMUL. Beyond teaching university students, she has developed and delivered a series of courses for pre-university students (aged 18-19, Oxford summer courses) and for industry practitioners, for example the online ResponsibleAI course at The Alan Turing Institute.

Teaching

- 2022 – 2024 MSc, Neural Networks & NLP, Queen Mary University of London

- 2021 – 2024 MSc, Artificial Intelligence, Queen Mary University of London

- 2020 – 2021 MSc, NLP (lectures on text classification and Transformers), Imperial College London, module organiser: Prof Lucia Specia

- ResponsibleAI course, The Alan Turing Institute. For interested experts, a key outcome is familiarity with the main techniques for designing explainable (XAI) and transparent AI systems, together with the ability to apply them in practice; for AI practitioners in particular, a further outcome is the ability to build NLP classification models that explain their decisions in natural language (GitHub).

Mariia has a Master of Science with Distinction in Computer Science from Queen Mary University of London, and a Bachelor of Science in Natural Language Processing from the Higher School of Economics (HSE University).

Mateusz has a Bachelor's degree in Electronic & Electrical Engineering from University College London and is studying towards a PhD in Electronic Engineering at Queen Mary University of London. He is currently working on zero-shot text anonymisation using large language models, open-ended search for prompt generation, and alignment of foundation models.

Vishal has a Master's with Distinction in Artificial Intelligence in Computer Vision and Robotics, and a Bachelor's in Computer Science from RGPV University, India. He is currently working on identifying and mitigating bias in electronic health records (EHRs) using generative AI, tracking progress in cognitive behavioural therapy in relation to physical activity and exercise, and understanding privacy and its impact on chatbots.